Energy: The bottleneck on intelligence growth

What are the consequences of energy for the intelligence era?

Nvidia recently published “AI is a 5-Layer Cake”[1]. Today I make the case that the energy layer is the binding constraint on intelligence growth, and explore the consequences.

Human civilisation’s progress is a consequence of our ability to harness tools, whether hammers, fire, horses, the printing press, telephones, light bulbs, steam engines, radios or AI. These ‘tools’ are ways in which human beings leverage energy into productivity.

At a base level we are improving human productivity by capturing energy and directing it with tools towards our goals.

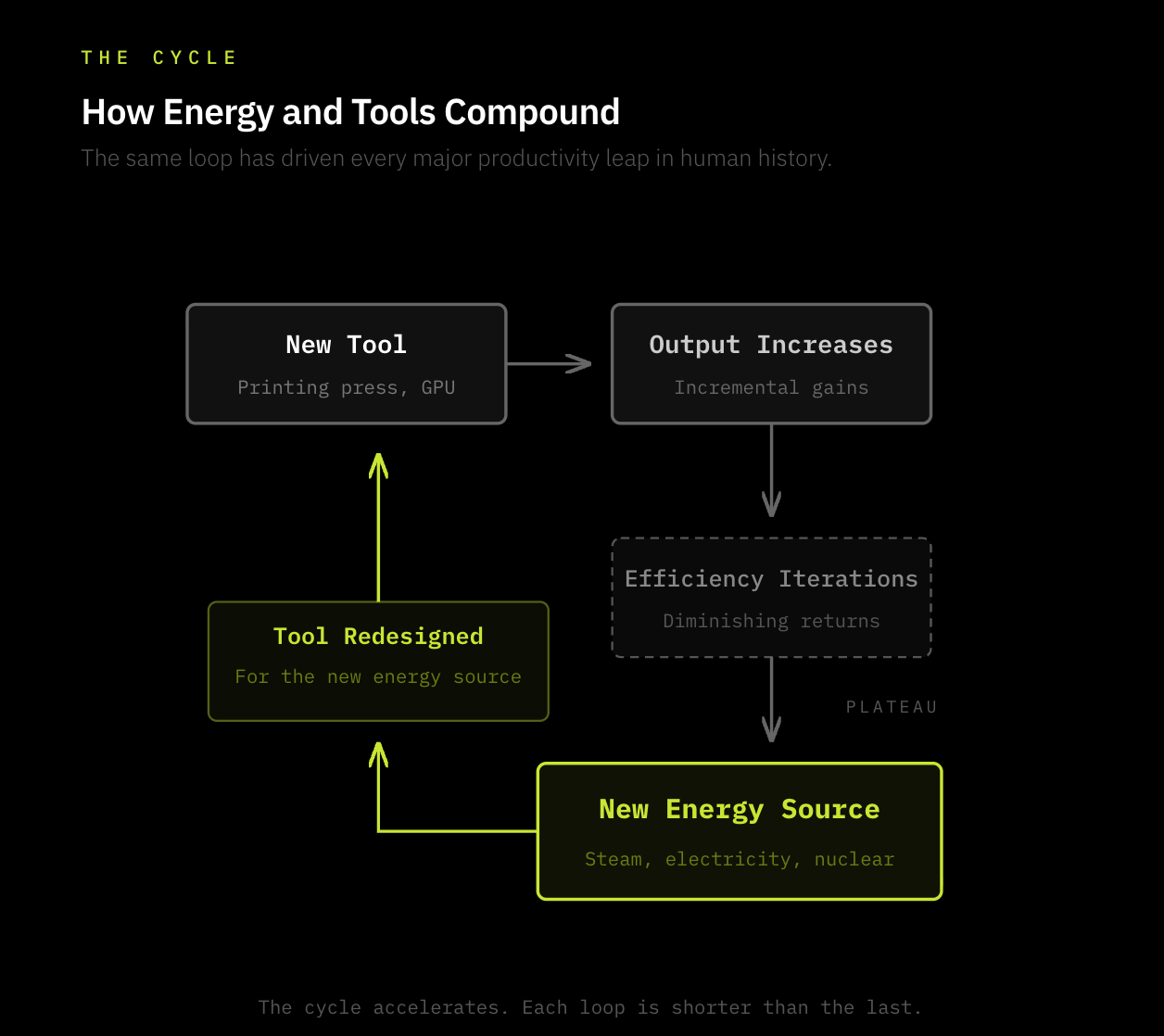

Put simply, the advancement of human civilisation at its core looks like:

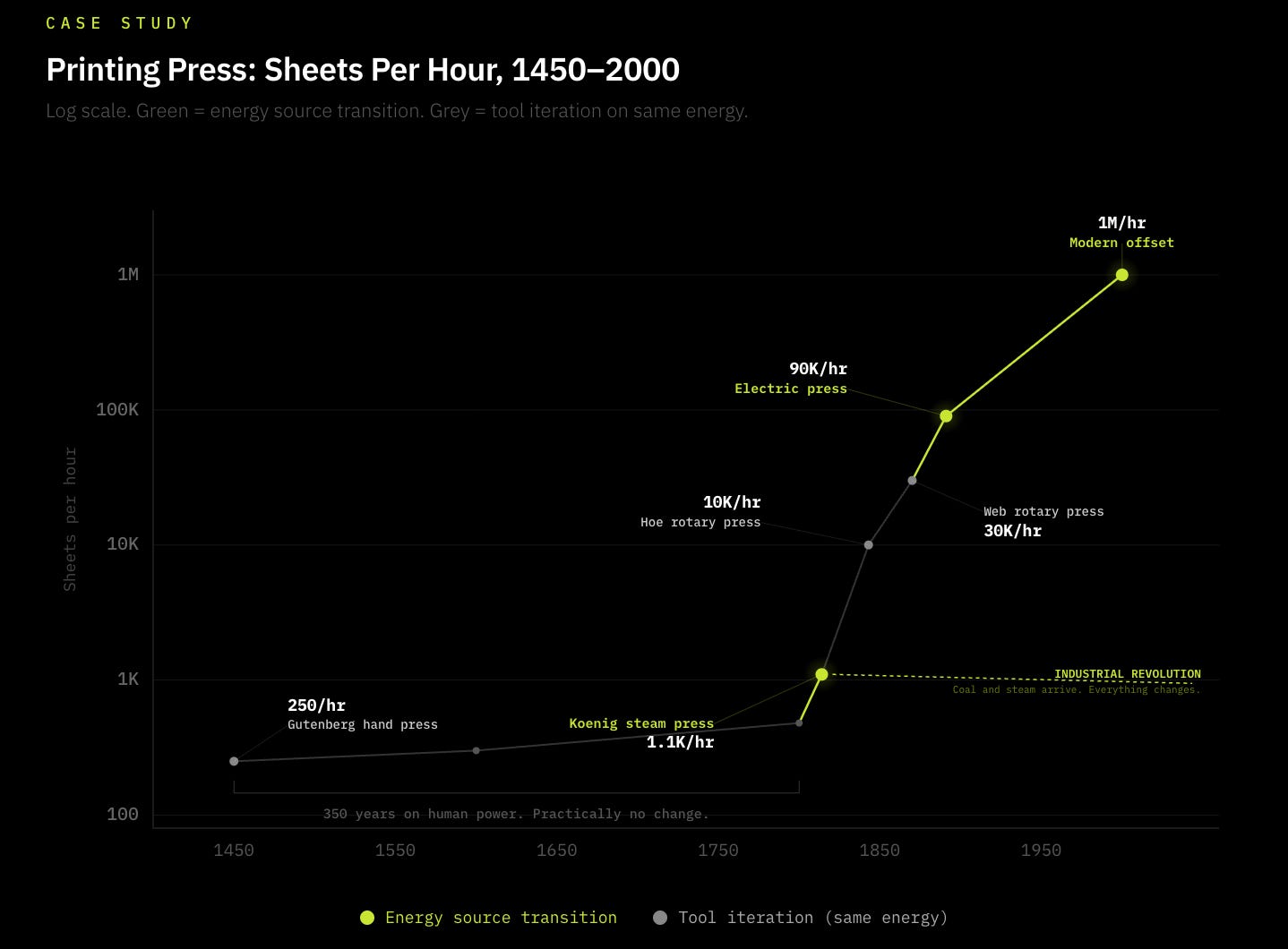

For the majority of human history, humans relied on their own energy, with their hands as tools, to advance their aims, whether farming or writing. A great example of how energy & tools have progressed is the printing press, popularised in 1440 by Gutenberg[2]. Before this innovation, humans used their own energy to wield pens (the tool) and write information by hand: highly inefficient. The printing press was a new tool that directed human energy far more effectively, using a press to mechanically imprint words onto paper. The tool side of the equation improved productivity by orders of magnitude.

However, there were practically no real innovations in the printing press from 1450 to 1800, almost 350 years. That changed when humans harnessed a more powerful energy source, coal, to improve the energy side of the equation. In 1814 Friedrich Koenig invented the steam-powered printing press[3], adapting the press to the prevailing energy innovation and increasing efficiency by 5x. From there the press kept adapting to the new energy source, increasing output from 250/hr to 30,000/hr 50 years later, and to millions today.[4]

And so continues the process of innovating new tools, making breakthroughs in harnessing energy, and improving the efficiency of those tools relative to the energy available. Today intelligence is the new form of productivity we’re focusing on, and energy is its fuel. Crucially, the rate at which intelligence can keep growing depends on how much sustainable, reliable energy we can produce to power our tools (GPUs) and direct them towards our ends (intelligence).

This thesis overlaps with the Kardashev scale, a method of measuring a civilisation’s technological advancement by how much energy it can harness. From planets to stars, galaxies, universes and the multiverse, how much energy we can harness indicates how far we’ve progressed as a civilisation. Historically this has held true, and it’s no different going forward. Our ability to harness energy is fundamental to progressing civilisation.

The crux of this piece is that energy demand is rapidly outpacing supply, making energy the primary bottleneck in advancing intelligence. I then explore the primary & secondary effects of this thesis.

Why is energy supply slowing?

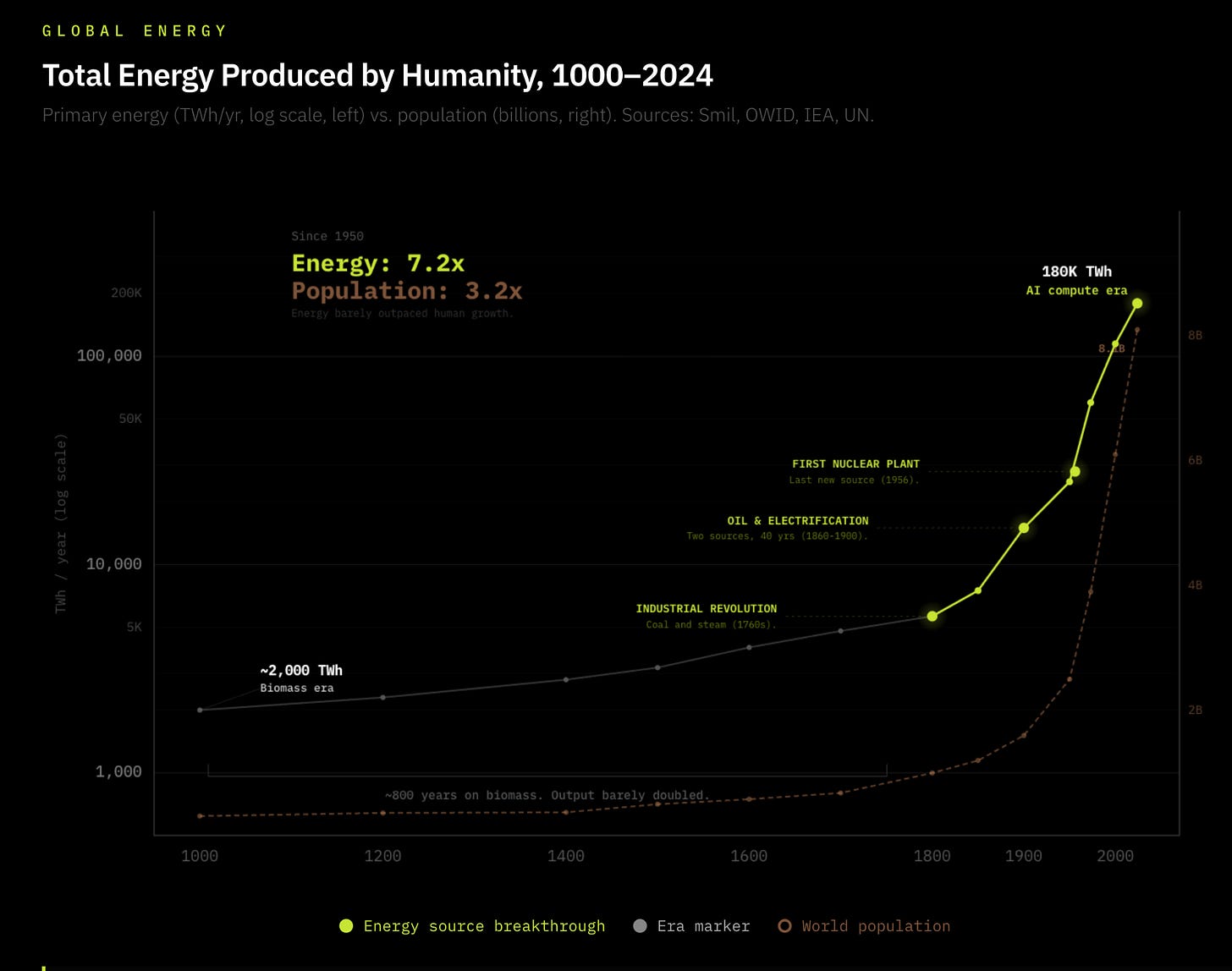

Nuclear fission was discovered in 1939, and remains the last remarkable shift in energy since the dawn of humanity. However, given Chernobyl and a global commitment to shift away from nuclear power towards renewable energy, we’ve seen a clear mismatch between tool innovation and energy advancement since 1950. In 1950 the world was producing 2,600 GW of energy vs. 19,000 GW today (7.3x)[5]. This may seem a leap, but this gradual, linear growth lags far behind the growth of modern computing & technology, and barely outpaces population, which grew 3.5x over the same period.[6]
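As a rough check on these figures, the growth multiples can be worked through directly. The sketch below uses the 2,600 GW, 19,000 GW and 3.5x figures quoted above; the 75-year span (1950 to roughly today) and all variable names are my own assumptions for illustration.

```python
# Figures quoted in the text
power_1950_gw = 2_600
power_today_gw = 19_000
population_multiple = 3.5

# Assumption (not from the text): "today" is taken as ~75 years after 1950
years = 75

# Total growth, growth per person, and the constant annual rate that
# would produce the same total growth over the assumed span
energy_multiple = power_today_gw / power_1950_gw
per_capita_multiple = energy_multiple / population_multiple
annual_growth = energy_multiple ** (1 / years) - 1

print(f"Energy multiple:       {energy_multiple:.1f}x")
print(f"Per-capita multiple:   {per_capita_multiple:.1f}x")
print(f"Implied annual growth: {annual_growth:.1%}")
```

Under these assumptions, energy per person has only roughly doubled since 1950, and the implied compound growth rate is under 3% a year.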

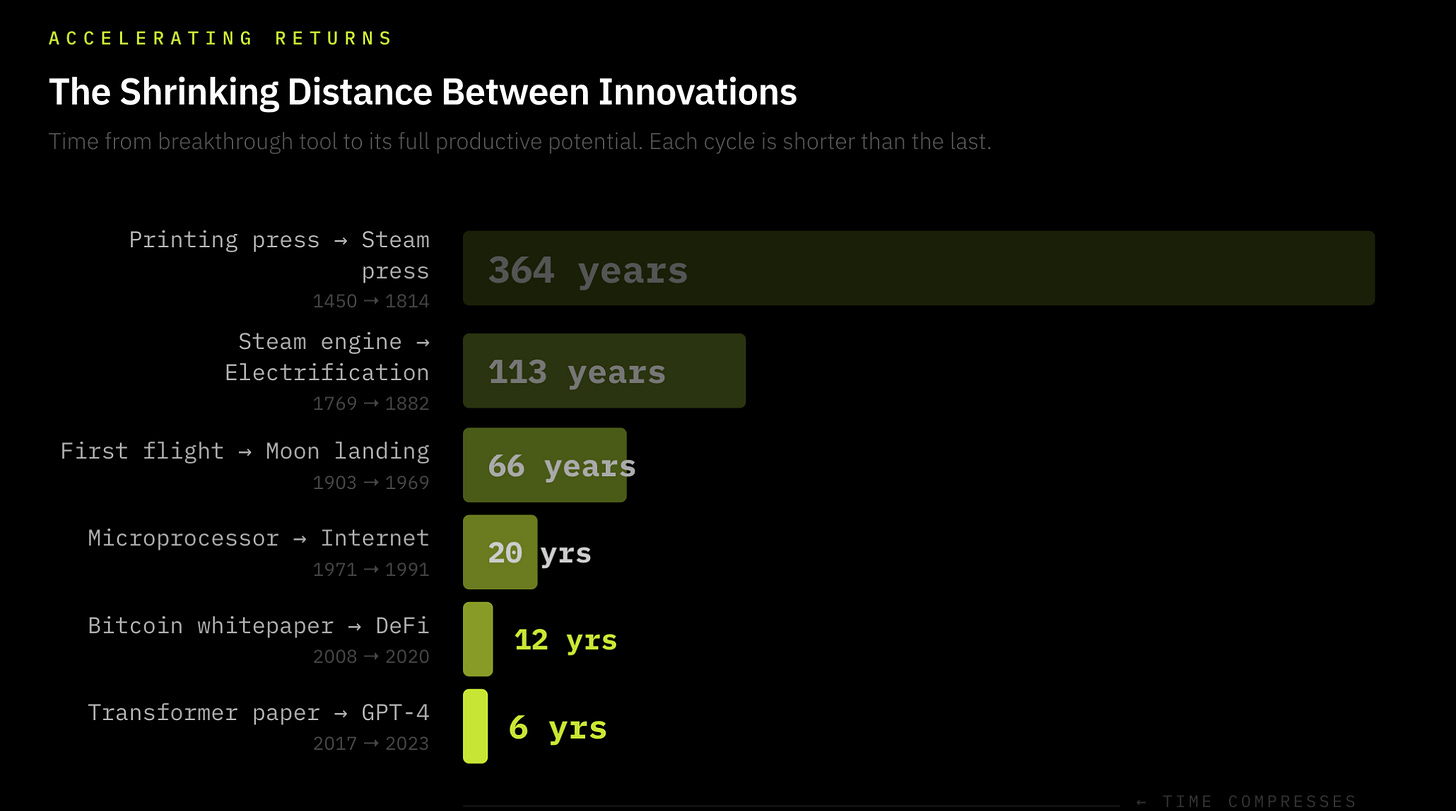

In comparison, the gap between quantum leaps in tool innovation is shortening. The time between the first printing press & its next major adaptation was 364 years, first flight to space travel took 58 years[7], the first microprocessor to the internet took 20 years[8], and today new leaps in GPUs occur every 2 years. We’re living in ever-shortening windows of accelerating tool efficiency, so much so that multiple innovations now overlap in ever-quickening cycles. From AI to cryptography to quantum computing, new innovations are being discovered faster and their efficiency gains are arriving sooner: the law of accelerating returns.

Data centres today sit at 1.5% of global electricity consumption and are projected to hit 3% by 2030[9], covering in 6 years what the steam engine took 50 to achieve. The key difference between the Industrial Revolution and the current intelligence explosion is that the Industrial Revolution built its own energy supply in lockstep with demand: coal mines, canals, and rail networks expanded alongside the machines consuming them. Every prior energy revolution built its supply chain as it scaled; AI inherited one, and it’s already breaking it.
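The pace implied by that projection can be made explicit. This is a minimal sketch that assumes the share compounds at a constant annual rate over the six years, which is my simplification; the 1.5% and 3% figures are the ones quoted above.

```python
share_today = 0.015   # data centre share of global electricity (from the text)
share_2030 = 0.03     # projected share (from the text)
years = 6             # span quoted in the text

# Assumption: the share grows at a constant compound rate over the period
share_growth = (share_2030 / share_today) ** (1 / years) - 1

print(f"Implied annual growth in share: {share_growth:.1%}")
```

Note this is growth in the share, not in absolute consumption. If total electricity use also rises over the period, data centre consumption in absolute terms has to grow faster than this figure.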

The energy grid is simply not prepared for an intelligence explosion demanding 15% YoY consumption growth, all whilst US power demand growth has been zero for the last decade[10]. Cracks are already appearing in the US: grid connection queues are at record lengths[11], and large transformer delivery times already average 24 to 36 months, with power transformers facing a 30% supply deficit in 2025[12]. Morgan Stanley estimates the US alone faces a 45 gigawatt power shortage by 2028, equivalent to the electricity needs of 33 million American households[13]. I think that deficit could be far greater.
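Two quick sanity checks on these numbers, sketched under simple assumptions: that the 15% growth rate stays constant, and that the 45 GW shortfall is spread evenly across the 33 million households as a continuous average draw. Variable names are illustrative.

```python
import math

yoy_growth = 0.15          # consumption growth quoted in the text
shortfall_gw = 45          # Morgan Stanley estimate quoted in the text
households = 33_000_000    # household equivalent quoted in the text

# Years for demand to double at a constant 15% a year
doubling_years = math.log(2) / math.log(1 + yoy_growth)

# Average continuous draw per household implied by the equivalence
kw_per_household = shortfall_gw * 1e6 / households   # 1 GW = 1e6 kW
kwh_per_year = kw_per_household * 8_760              # hours in a non-leap year

print(f"Doubling time at 15% YoY: {doubling_years:.1f} years")
print(f"Implied draw per household: {kw_per_household:.2f} kW")
print(f"Implied annual use per household: {kwh_per_year:,.0f} kWh")
```

At 15% a year, demand doubles in about five years, against a grid whose demand has been flat for a decade. The household equivalence works out to roughly 1.4 kW of continuous draw, or about 12,000 kWh a year per household.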

The problem seems clear: humanity needs to scale energy aggressively to keep up with innovation leaps in AI, robotics, autonomous travel & more.

The primary & secondary effects of an impending energy deficit

The consequences of the impending energy deficit are historic: as demand for energy skyrockets and supply underwhelms, we’re likely to see quasi-privatised energy markets.

We’re already beginning to see this with hyperscalers embedding their own behind-the-meter energy generation, with plans to extend this to nuclear-powered data centres. I believe this trend is only going to become more pronounced.

Below I present seven theses, all of which derive from the intelligence explosion and its effect on an ever-strained electricity supply.

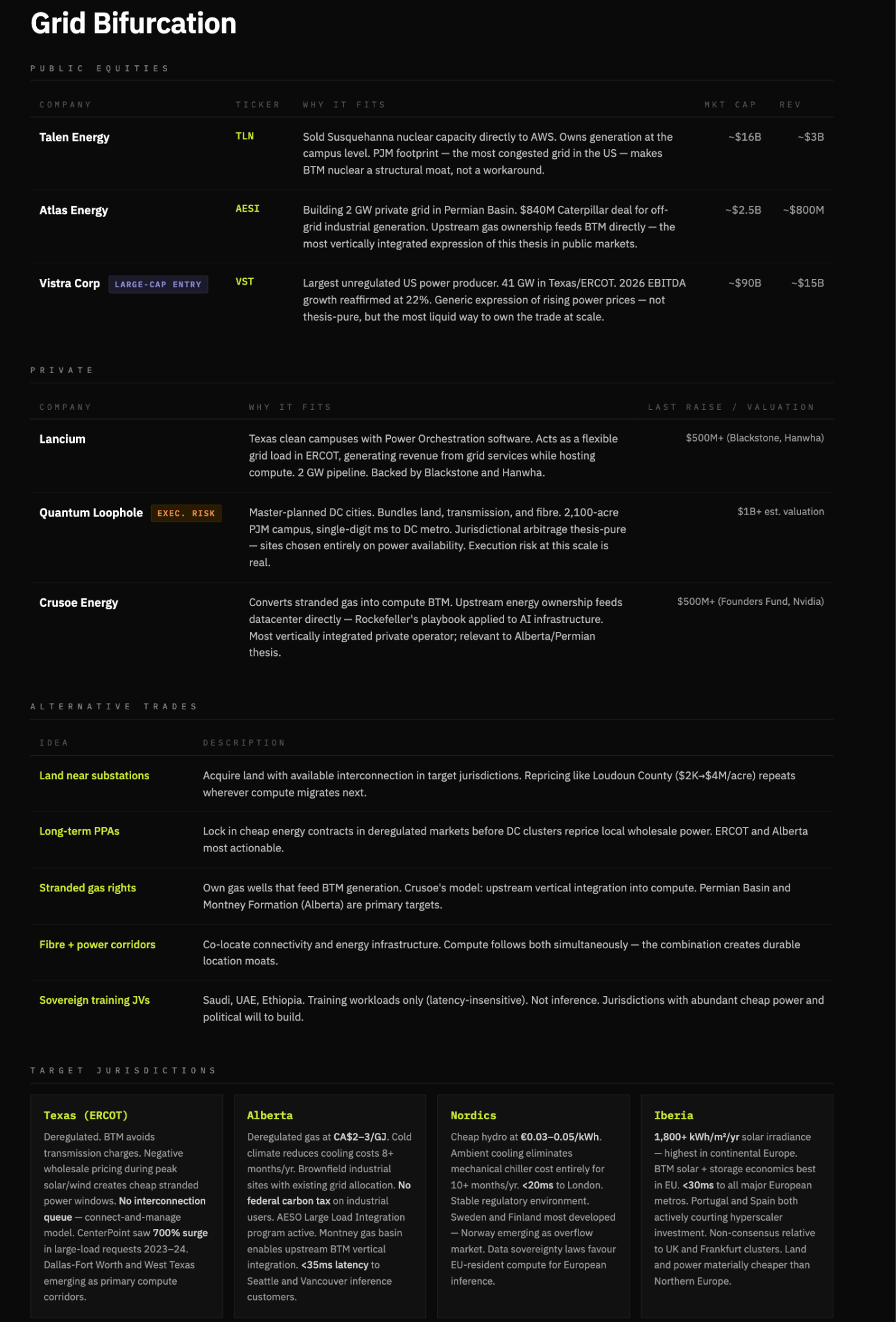

Thesis 1: Grid bifurcation; compute will migrate to power, not the other way around.

Power-rich, regulation-light jurisdictions in proximity to inference demand will capture disproportionate value as energy systems fragment.

As energy demand begins to outpace supply, electricity becomes politically sensitive. Households vote, data centres don’t. In an energy deficit, grids are unlikely to remain neutral; they are more likely to prioritise residential demand over industrial demand through pricing, access restrictions or soft caps.

Given how sensitive compute is to latency, uptime and reliability, operating in jurisdictions that prioritise households won’t be feasible. As grid access becomes volatile or politicised, compute workloads shift off-grid into behind-the-meter (BTM) generation, where power can be secured, controlled, and priced directly.

This drives a structural shift where compute migrates to power-rich, regulation-light economies. The winners are those that can bundle land, interconnection, energy generation, and fibre into a deployable, repeatable system. The jurisdictions in which they operate also stand to benefit.

Thesis 2: Energy becomes a competitive moat; BTM energy generation becomes a core competency which differentiates compute providers from one another.

This, to me, is the most crucial first-order effect of increasing energy deficits. In a world where energy demand is running ahead of supply, access to cheap, reliable power is a structural cost advantage that compounds over time. Not only that, but data centres taking priority over households for grid electricity is politically untenable, and that is the trajectory we’re on. Furthermore, the increasing strain on national grids will force compute providers to generate their own electricity, a trend we’re beginning to see with hyperscalers. Infrastructure that doesn’t have BTM generation will simply be left behind.

In essence, the companies that own their power win. The companies that rent it lose. Without BTM generation, compute providers face power reliability issues (fatal), rising costs, and power restrictions. Pure colocation REITs (Equinix, Digital Realty) without owned generation become less valuable relative to vertically integrated operators. The companies combining energy generation with compute hosting (Crusoe, Iren & select hyperscalers) are building the deepest moats. This could be expressed as a long/short trade, but I prefer highlighting integrated winners here.

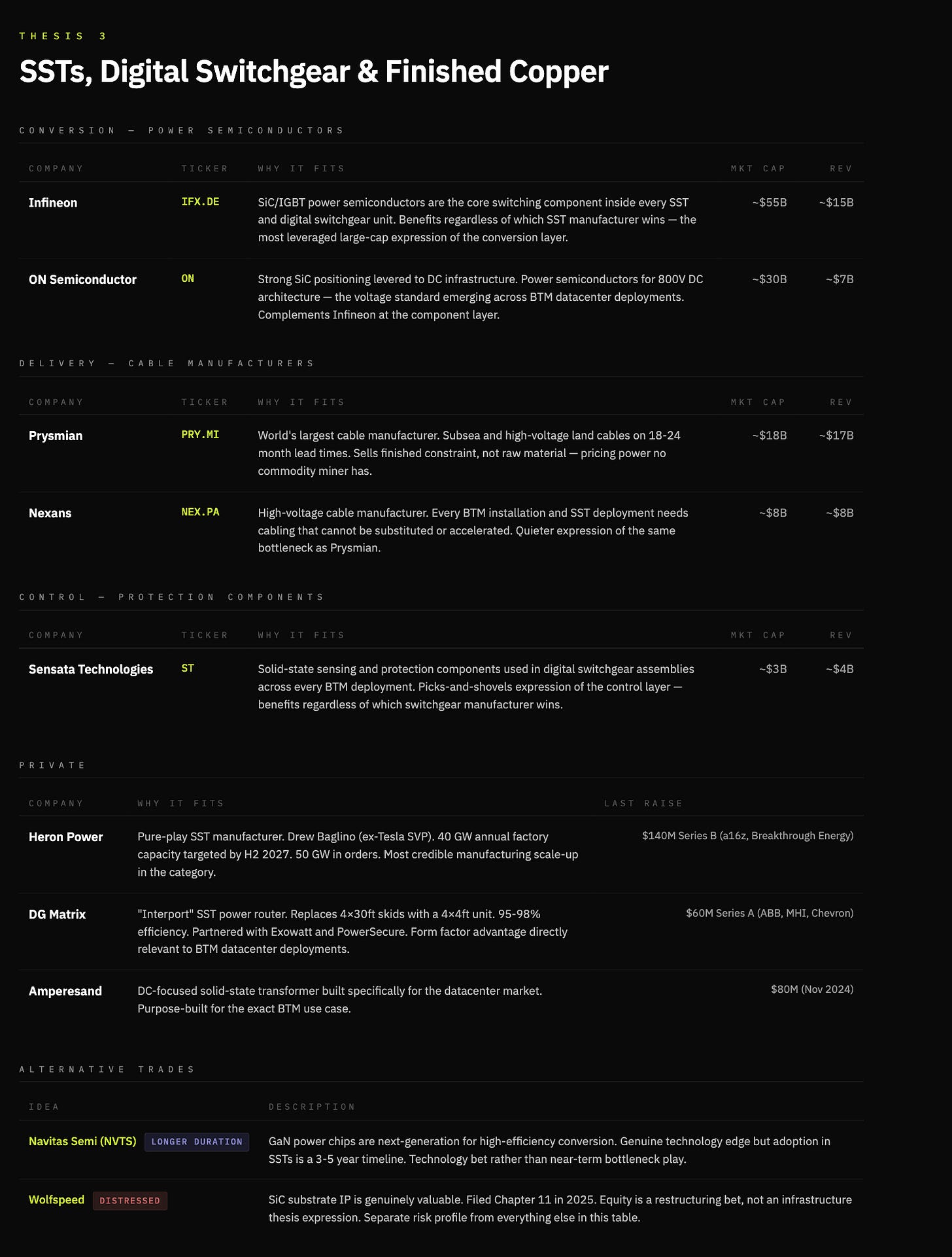

Thesis 3: BTM standardisation drives a shift from traditional transformers to solid-state transformers, and from switchgears to digital switchgears.

Conventional transformers step AC grid voltage up or down. Given their scale and materials, lead times are hitting 24-36 months with a 30% supply deficit. They’re also an 1880s technology, hand-built around constrained materials. Crucially, every megawatt of BTM generation has to be converted, regulated and delivered to compute; there is no workaround to transformers.

A solid-state transformer (SST) replaces this with high-frequency power electronics. It’s smaller, faster and fully controllable, handling AC-DC conversion, voltage regulation and bidirectional flow in a single unit. SSTs are also easier to manufacture, relying on wide-bandgap power semiconductors (SiC/GaN) rather than massive copper windings and oil-filled tanks. As BTM becomes the standard architecture, the device sitting between energy and compute becomes the bottleneck. That device is the SST.

Switchgears have also seen 80-week delays[14]. They are the control layer between generation and load: routing power, isolating faults and protecting the system. Like transformers, switchgears are a labour-intensive product built around constrained materials, virtually unchanged since the 1880s.

Digital switchgears replace this with solid-state power electronics. Faster, programmable, and fully controllable, they enable real-time fault detection, remote isolation, and dynamic load routing. Just as importantly, they scale like electronics rather than industrial equipment.

As an aside on copper: my view is constructive. Copper is the highway of electrons and will be the primary commodity required in an increasingly electrified world. However, the expression of this trade is nuanced. Traditional miners earn low margins, which can compress over time. The finished products where copper is non-substitutable and time-constrained are the real bottleneck, and an area of future value accrual. Cable manufacturers like Prysmian and Nexans sell the finished, constrained product rather than raw material, and with lead times on transformers widening aggressively, this is no longer a commodity market.

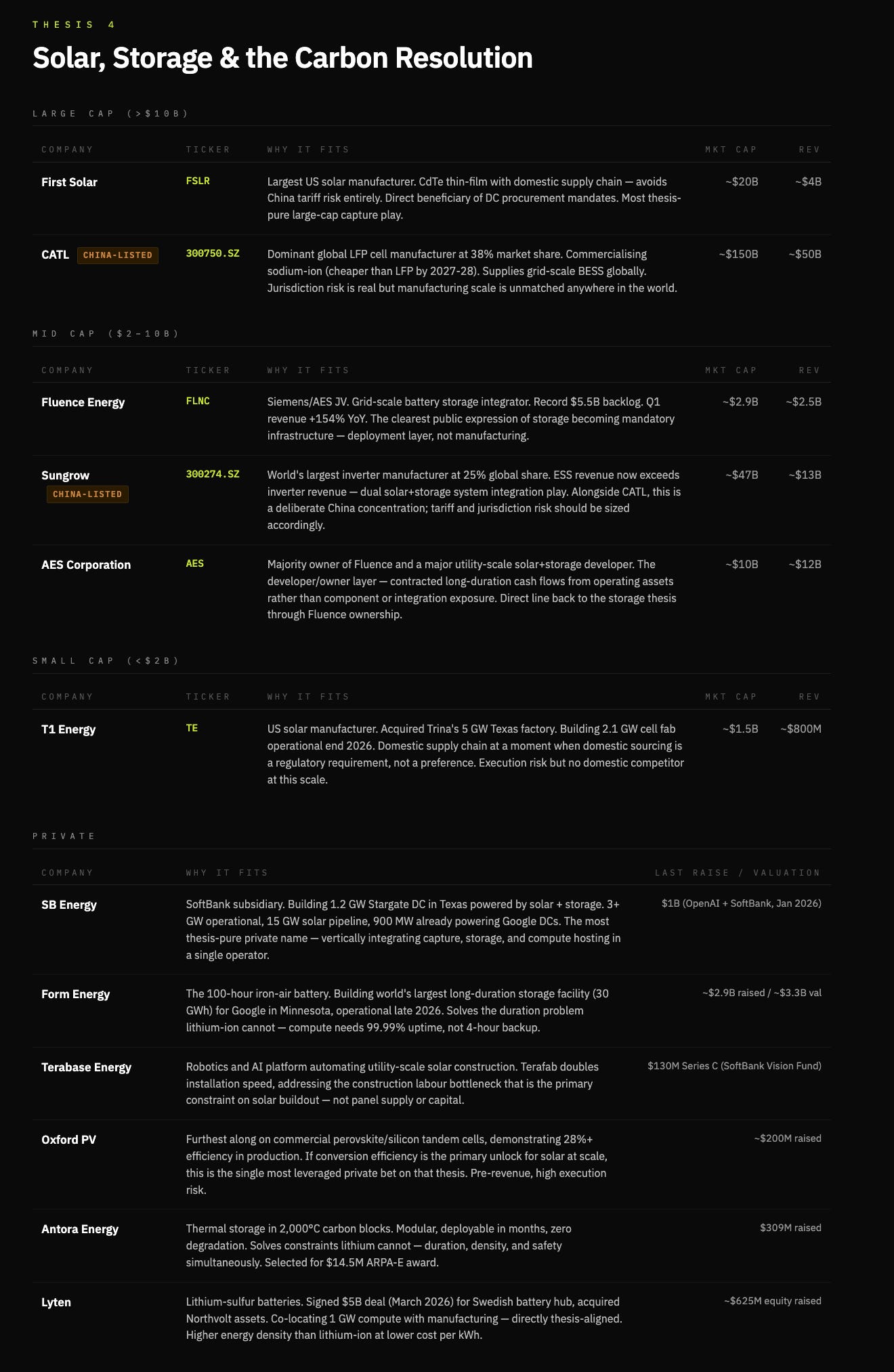

Thesis 4: The carbon cost of AI becomes politically untenable, forcing a primarily solar & battery resolution.

The AI buildout has a carbon problem it hasn’t priced yet, which is a political constraint. Data centres raise power prices, consume water at scale, and increase local emissions. This is already visible, with $18bn of data centre projects cancelled outright and $46bn delayed[15].

Today, ~56% of data centre electricity comes from fossil fuels[16]. Gas has solved for speed of deployment, but it is politically fragile. As demand scales, resistance to fossil-based expansion increases, forcing a near-term hybrid system of gas, nuclear, and renewables.

While gas has acted as a short-term bridge in the explosion of data centres, over longer time horizons, energy abundance is not solved by fuel extraction, but by energy capture. The sun delivers orders of magnitude more energy to Earth than humanity consumes. The constraint is not availability, but conversion, storage, and deployment.

Solar is not the immediate solution to compute’s energy demand; it is the terminal one.

Current commercial solar captures ~22% of incoming energy[17]. Each incremental improvement in conversion efficiency reduces the cost per delivered megawatt, pushing solar closer to parity with dispatchable generation in BTM systems.

Battery storage becomes a core component of this architecture. Not just to smooth intermittency, but as a revenue layer. Storage arbitrage and load balancing convert what was historically a cost centre into a margin contributor for BTM operators.

Winners on this thesis vertically integrate capture, storage, and delivery: purpose-built solar developers with BTM contracts, battery manufacturers with grid-scale and site-level products, and the handful of operators who can combine owned generation with compute hosting.

Solar is a procurement and manufacturing game, batteries are the constraint and monetisation layer, integration captures margins, and frontier tech remains optionality, not the base case. In this respect, Tesla may continue to be the big winner here, but I’ve kept selections to non-consensus picks.

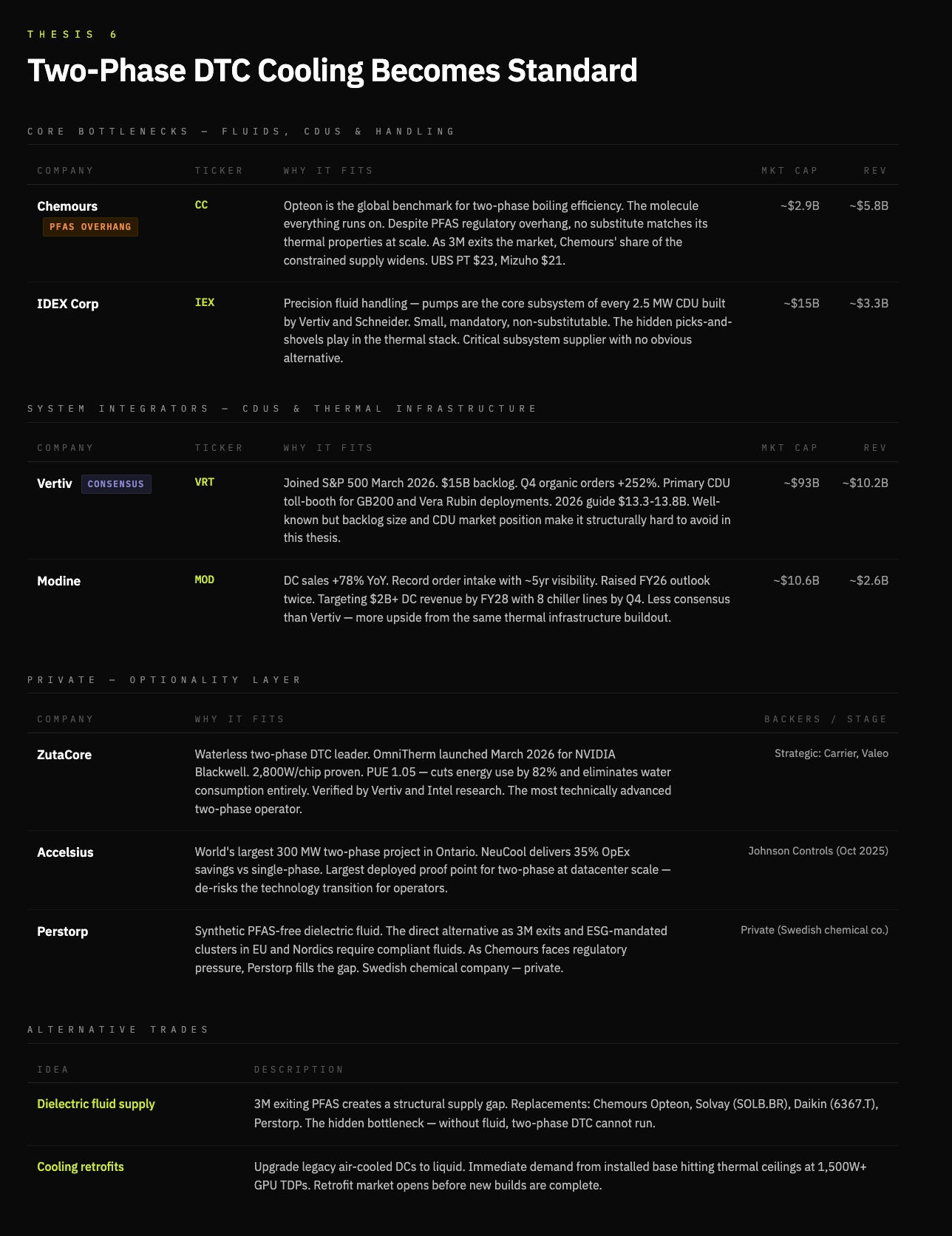

Thesis 5: Cooling becomes a first-class constraint; two-phase D2C cooling becomes necessary at the frontier

A further consequence is the emergence of two-phase direct-to-chip (D2C) cooling. Admittedly, this thesis is mixed in with my own view that chip power density is on a parabolic trajectory, making for an increasingly problematic thermodynamic equation. Traditional air cooling is simply untenable for several reasons, the primary one being that it doesn’t work with higher-density chips, on top of the environmental issues of water & power consumption.

Firstly, D2C cooling lets density & performance scale without being constrained by heat management, a key issue in scaling. The current market reality is single-phase dominance given its simplicity: it runs cold water through a cold plate to cool the chip, but it has a known ceiling. The transition to two-phase will become essential when chip power densities rise past 1,500W. Two-phase systems pump a dielectric fluid around the chip that is engineered to boil at low temperature; this phase change from liquid to gas dramatically increases cooling efficiency.

Two-phase cooling delivers a 20% decrease in energy use & a 48% reduction in water consumption[18]. This headroom allows for denser chiplet packaging, increasing performance, which in turn demands higher-performance cooling.

Zutacore, a leading two-phase D2C company, has demonstrated two-phase D2C cooling that uses dielectric fluid rather than water, cutting energy consumption by 82% and eliminating water consumption[19]; these results were verified by Vertiv & Intel’s research. Zutacore is an interesting private operator here, and to go a step further, it may be worth digging into dielectric fluid providers.

Thesis 6: Nuclear can act as a bridge to energy abundance and consistent power delivery, but is not the long-term answer to energy expansion.

Whilst writing this article, I initially thought that nuclear was a great way to cover short-term gaps in energy deficits. The reality is that the cost of deploying a small modular reactor (SMR) is 5-10x that of a comparable natural gas system ($10-15k/kW)[20], which is untenable for widespread deployment & scaling.

Rather, nuclear solves for reliability, not speed or cost, particularly when installed BTM. It provides stable, dispatchable baseload power where reliability is non-negotiable. Nuclear therefore has a role to play in the energy deficit, as a bridge rather than a core offering.
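To give a sense of scale for the cost gap noted above, the quoted range can be applied to a single site. This is a rough sketch that reads the $10-15k/kW figure as the SMR cost, which is my interpretation of the parenthetical, and uses a hypothetical 100 MW installation that does not come from the sources.

```python
smr_cost_per_kw = (10_000, 15_000)   # USD/kW, range quoted in the text
cost_ratio = (5, 10)                 # SMR vs gas multiple quoted in the text
site_mw = 100                        # hypothetical site size (assumption)

site_kw = site_mw * 1_000
smr_capex_low = smr_cost_per_kw[0] * site_kw
smr_capex_high = smr_cost_per_kw[1] * site_kw

# Gas cost range implied by the quoted multiples, taking the widest reading:
# cheapest SMR at the largest multiple, dearest SMR at the smallest multiple
gas_low = smr_cost_per_kw[0] / cost_ratio[1]
gas_high = smr_cost_per_kw[1] / cost_ratio[0]

print(f"SMR capex for {site_mw} MW: ${smr_capex_low / 1e9:.1f}bn to ${smr_capex_high / 1e9:.1f}bn")
print(f"Implied gas cost: ${gas_low:,.0f} to ${gas_high:,.0f} per kW")
```

On this reading, a 100 MW nuclear installation costs $1.0bn to $1.5bn before fuel, against an implied $1,000 to $3,000 per kW for gas.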

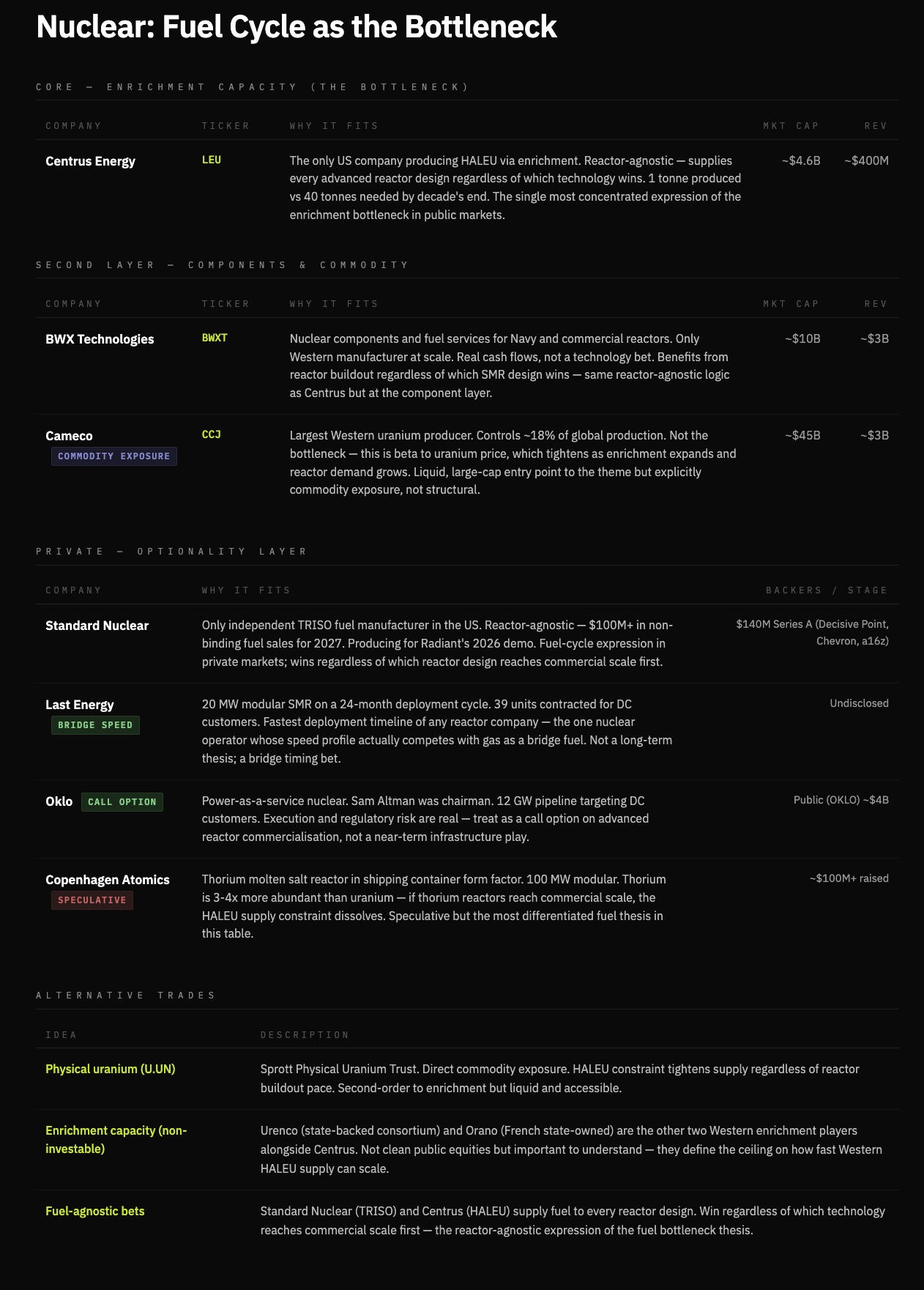

Nuclear is constrained by its fuel cycle as well as build time. Advanced reactors require HALEU (high-assay low-enriched uranium), a fuel that has almost no commercial-scale supply today[21]. Even if reactors are built, the ability to fuel them becomes the gating factor on how quickly nuclear can scale.

Nuclear, therefore, is unlikely to be the marginal solution to energy expansion; it’s slow to market, capital-intensive and constrained by infrastructure and fuel. In contrast, the systems that scale fastest, gas in the near term and solar & storage in the longer term, are the options that close the gap.

The investable bottleneck is not reactors, but fuel. As SMR demand scales, HALEU enrichment becomes the gating layer, a reactor-agnostic choke point where value accrues regardless of which design wins.

Thesis 7: A new class of energy-infrastructure conglomerates emerges; the vertical integrators refining electrons into compute

The bottleneck in AI infrastructure is not only energy, but the ability to convert energy into usable compute at scale.

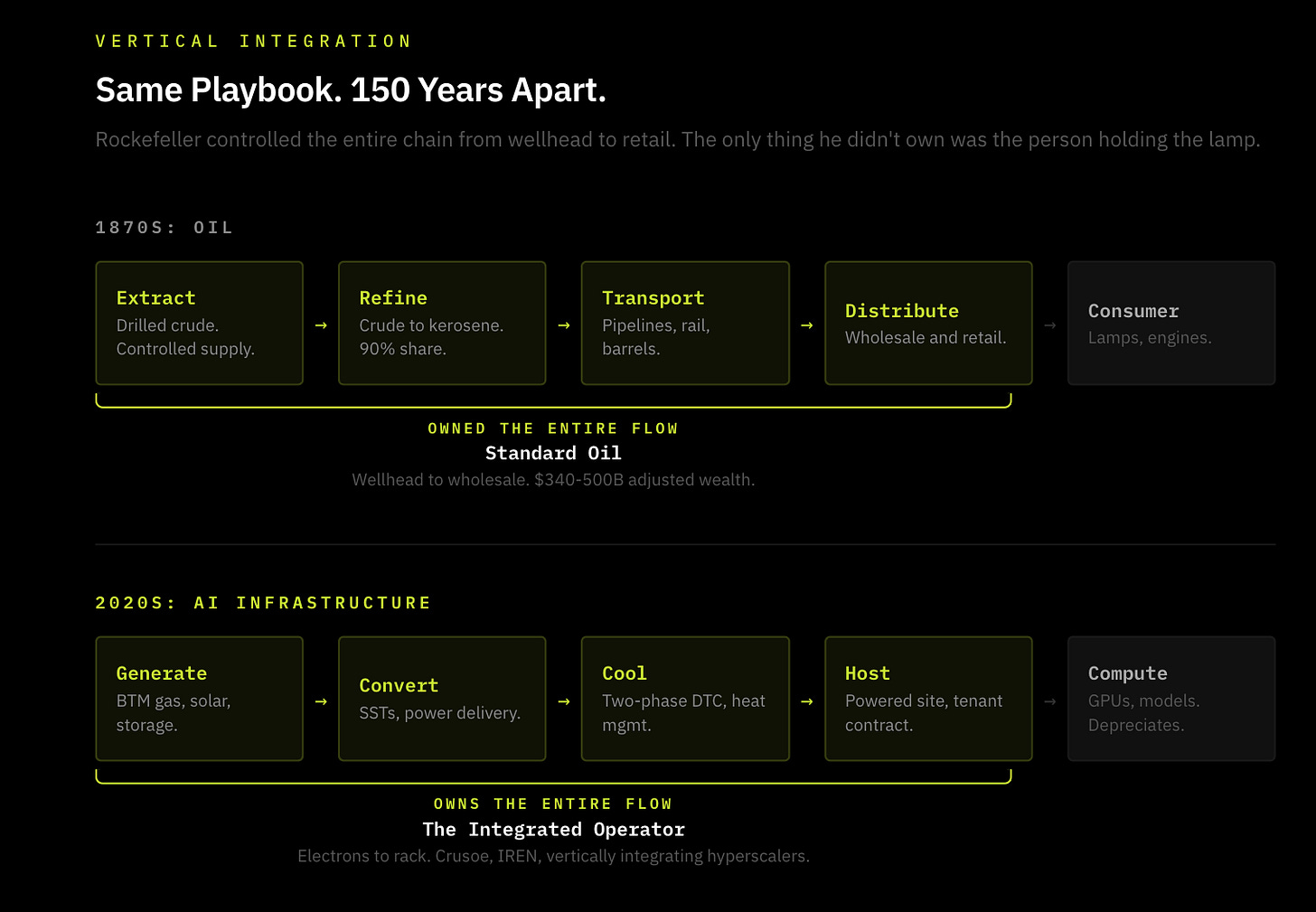

In the 1870s, oil, much like electricity today, wasn’t scarce, but refining and distribution were. Vertically integrating the extraction of crude oil, its refining, and its distribution to households was how Rockefeller built one of the largest companies of all time (Standard Oil).

The intelligence revolution follows the same pattern; electricity is crude oil. There’s plenty of electricity, but reliably converting it into compute, with power delivery, cooling, connectivity and permitting, is the constraint. The refinement of electrons is where the value lies. Each additional layer owned increases reliability, reduces cost, and captures margin, making vertical integration self-reinforcing.

Hyperscalers are the distribution layer in this system and the endpoints where compute is consumed. However, the structural opportunity is in owning the infrastructure the distributor is forced to buy from. This creates a new class of energy-infrastructure conglomerates, operators that control generation, conversion, cooling and hosting in a single stack.

The clearest expressions are vertically integrated operators like Crusoe and Lancium in private markets, and power-native compute platforms like Iren and Core Scientific in public markets, which already own the hardest layer to replicate: energy.

The companies that control the flow of electrons to the rack are building the deepest moats in the AI economy. Software can’t eat physical infrastructure.

[1] https://blogs.nvidia.com/blog/ai-5-layer-cake/

[2] britannica.com/biography/Johannes-Gutenberg

[3] britannica.com/biography/Friedrich-Koenig

[4] The Printing Revolution in Early Modern Europe.

[5] bp.com/statisticalreview

[6] https://www.population.un.org/wpp

[7] Wright Brothers first flew in 1903. Yuri Gagarin went to space in 1961.

[8] Intel 4004 (first microprocessor) launched in 1971. The World Wide Web launched in 1991 = 20 years.

[9] iea.org/reports/energy-and-ai/energy-demand-from-ai

[10] woodmac.com/press-releases/power-transformers-and-distribution-transformers-will-face-supply-deficits-of-30-and-10-in-2025/ (cited in context). Also: eia.gov/todayinenergy/detail.php?id=67344

[11] iea.org/reports/energy-and-ai/energy-demand-from-ai

[12] woodmac.com/press-releases/power-transformers-and-distribution-transformers-will-face-supply-deficits-of-30-and-10-in-2025/

[13] morganstanley.com/insights/podcasts/thoughts-on-the-market/data-center-financing-vishy-tirupattur-vishwas-patkar-carolyn-campbell

[14] woodmac.com/news/opinion/mind-the-gap-tackling-supply-chain-challenges-in-the-electric-td-sector/

[15] datacenterwatch.org/report

[16] arxiv.org/html/2411.09786v1

[17] nrel.gov/pv/cell-efficiency.html

[18] “Using life cycle assessment to drive innovation for sustainable cool clouds”, Microsoft & WSP Global researchers, published in Nature, May 2025

[19] zutacore.com

[20] world-nuclear.org

[21] energy.gov/ne/nuclear-reactor-technologies/advanced-reactor-demonstration-program/haleu-availability-program